The software market has grown exponentially over the past few years, and it’s great having so many people pushing for better software. But once we use version control, components, libraries, design tokens, animation… what’s next? A lot of work has been done, but how far could we go if the same tools that we use to design were able to interpret code?

Transitioning time

Five years ago I was sharing PSDs with developers, and it’s impressive how many developers crossed the line by learning to use tools like Photoshop to get direct access to design. Perhaps this is something to think about when we are the ones asked to cross the line.

teehan+lax were killing it during those years.

Photoshop, Flash, Dreamweaver, Fireworks… do these sound familiar? Designers have always found a way of being productive no matter the tools they have access to. It’s much more a question of attitude than tooling. However, there were obvious limitations on the nature of that software.

The first time I tried Sketch was in 2013. I decided to give it a try cloning a project that I had recently finished to compare what the new tool could provide me. After that exercise, I would never go back to Photoshop for UI design.

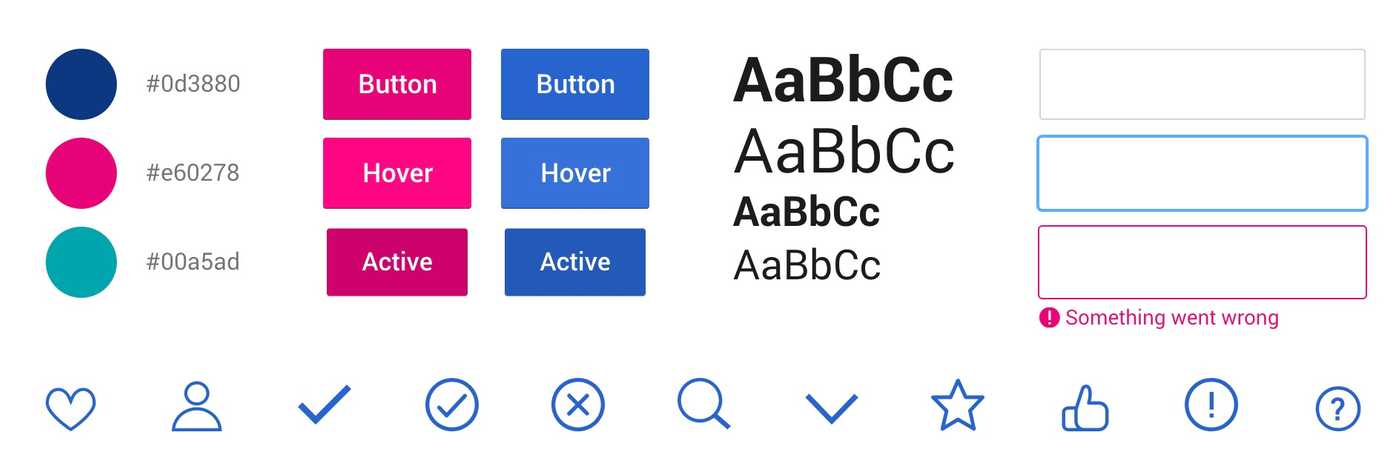

Naming conventions and design tokens helped designers and developers to speak the same language.

In the following years, tools like Zeplin or Invision made collaborating with developers easier, and a lot of tooling appeared around interaction and prototyping. At the same time, some companies began to centralise design using design systems, the concept of atomic design emerged, naming conventions started to get more attention, and things like design tokens helped designers and developers to speak the same language. It was during this process of learning and iterating that I saw the benefits of having a better understanding of coding.

It’s in the small details where we spend the most time. Updating colours by masking and nesting components are just two examples of how hacking solves some of the limitations that we find. But interfaces are complex; one small change like a line-height could affect the whole thing, and at some point, everything needs to be manually reviewed and tweaked to get things in place.
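To make the design tokens idea a bit more concrete, here is a minimal sketch in TypeScript. The token names and values are made up for illustration, and the helper functions are hypothetical rather than part of any particular tool. The point is only that a value such as a line-height lives in one place and everything else is generated from it.

```typescript
// Hypothetical token set: the names and values are illustrative only.
type Tokens = { [name: string]: string | number };

const tokens: Tokens = {
  colorPrimary: "#0055ff",
  fontSizeBody: "16px",
  lineHeightBody: 1.5,
};

// Turn a camelCase token name into a kebab-case CSS custom property name.
function toCssVariableName(tokenName: string): string {
  return "--" + tokenName.replace(/[A-Z]/g, (letter) => "-" + letter.toLowerCase());
}

// Emit a :root block that any stylesheet can consume.
function toCssVariables(source: Tokens): string {
  const lines = Object.keys(source).map(
    (name) => `  ${toCssVariableName(name)}: ${source[name]};`
  );
  return [":root {"].concat(lines, ["}"]).join("\n");
}

console.log(toCssVariables(tokens));
```

With something like this in place, changing `lineHeightBody` once updates every place that reads the generated variable, instead of being tweaked by hand screen by screen.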

Jon Gold articulated a solution for some of the problems previously described in this post from 2017: Painting with Code.

Some tools already allowed us to export code from a design; the main idea here was to reverse that and turn React components into Sketch designs. This year, tools like Framer, UXPin and Figma are pointing in a similar direction.

The source of truth

Does sharing a single repository for both design and development make sense? I’m not even questioning if that’s possible, just wondering if that’s positive. My feeling is that even if we find it a bit restrictive at some point, it would make it much easier to keep design and code in sync and, perhaps more importantly, to have designers and developers directly in charge of the same product. It sounds challenging, but I’m totally in.

Tooling time: things I’ve seen so far

HTML-Sketchapp

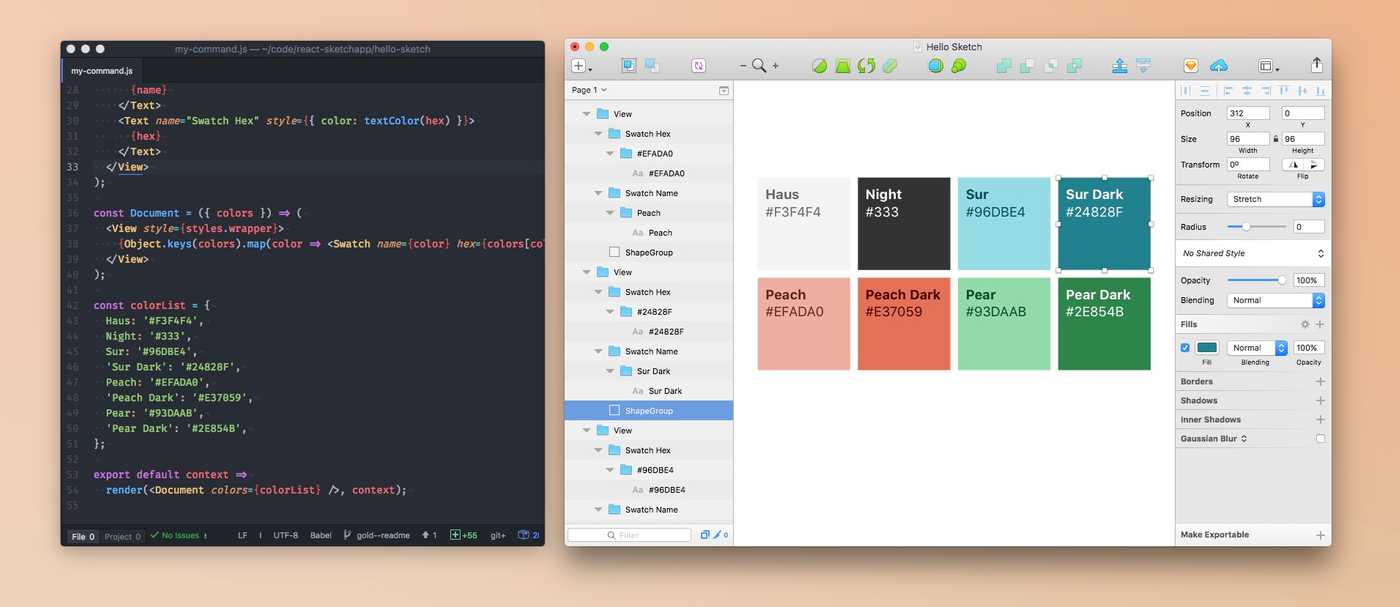

I tested a solution called HTML-Sketchapp with some good results. It takes HTML rendered in the browser and draws a Sketch file from it. It’s not perfect, but it does the job.

A great example of what can be achieved with this would be the UI Kit created by Seek.

If you want to go deeper, have a look at Mark’s highly recommended post: Sketching in the browser.

React-Sketchapp

A project from Jon Gold that I mentioned before: React-Sketchapp

Designing with data is important but challenging; react-sketchapp makes it simple to fetch and incorporate real data into your Sketch files.

Figma API

Figma presented its platform a few months ago. The announcement post touches on most of the points I’ve talked about.

This is the first time we’ve seen anyone create a real-time feed based on an online source of truth instead of a messy export flow.

Figma to React gives an idea of the potential of using open platforms.
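As a rough illustration of why an open platform matters, here is a small TypeScript sketch. Figma’s REST API returns a file as a JSON tree of nodes, and code can walk that tree to find the components a design defines. The `FigmaNode` interface below is a simplified assumption covering only the fields used here, and the sample document is made up rather than fetched from a real file.

```typescript
// Simplified node shape; real nodes in a Figma file response carry many more fields.
interface FigmaNode {
  id: string;
  name: string;
  type: string;
  children?: FigmaNode[];
}

// Walk the tree depth-first and collect the name of every COMPONENT node.
function collectComponentNames(node: FigmaNode): string[] {
  const own = node.type === "COMPONENT" ? [node.name] : [];
  const nested = (node.children || []).map((child) => collectComponentNames(child));
  return nested.reduce((all, names) => all.concat(names), own);
}

// Made-up sample standing in for the "document" field of a file response.
const sampleDocument: FigmaNode = {
  id: "0:0",
  name: "Document",
  type: "DOCUMENT",
  children: [
    {
      id: "0:1",
      name: "Page 1",
      type: "CANVAS",
      children: [
        {
          id: "1:2",
          name: "Button/Primary",
          type: "COMPONENT",
          children: [{ id: "1:3", name: "Label", type: "TEXT" }],
        },
        {
          id: "1:4",
          name: "Card",
          type: "FRAME",
          children: [{ id: "1:5", name: "Avatar", type: "COMPONENT" }],
        },
      ],
    },
  ],
};

console.log(collectComponentNames(sampleDocument));
```

From a list like this, a script could go on to generate component stubs or check that design and code use the same names, which is roughly the territory Figma to React explores.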

Framer

Framer is one of my favourite tools. Many designers started to feel comfortable coding simple animations by using this tool. You can do a lot with basic concepts, and even play with real data.

I’m curious about the direction of this product after the next release: Framer X.

Framer X will be entirely based on ES6 and React. We will port the current Framer library to a set of really nice (optional) React helpers for animation, advanced gestures, and interpolation. All open source, of course.

— Koen Bok (@koenbok) July 25, 2018

UXPin

UXPin announced a version aimed at closing the design-code gap: “Design with live code. The real source of truth”.

Tool-agnostic dream

Which tool are you using? How much would it cost you, and how long would it take, to move to a new one? Once we start a project with one of them, it’s a long-term contract. It becomes a legacy decision that keeps you ahead of or behind others depending on the tool’s roadmap. It’s similar to choosing the technology that you will use on your project, but somehow new tools like Framer or UXPin make me think about new possibilities.

Does this mean designers should code?

No. I think that having some knowledge about code is very important, but design is something other than visuals; there are many areas and programming is not key in all of them. But the tools that we use for UI design are the ones that should understand code.

Related links

- How to bridge the gap between Design and Development

- In web design, everything hard can be easy again

- What we don’t talk about when we talk about design tools

- Tokens in Design Systems

- Should Designers Code???? Part one, two, three and four.

- DesignOps Handbook

- The evolution of tools

- Building Cross-Platform Applications with a Universal Component Library

- UX Tools